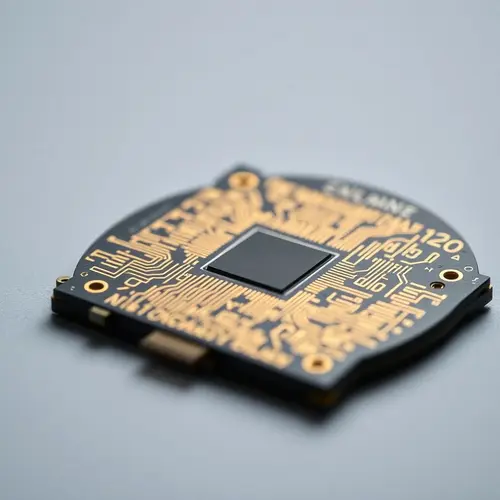

Exploring the intricate designs of next-generation semiconductor technology.

Nvidia AI Inference Chip Targets Real-Time AI Processing

Nvidia (NVDA) is preparing to introduce a new processor designed to speed up how AI systems respond to users. The chip focuses on inference computing.

The company is expected to unveil the chip at its GTC conference next month. The launch reflects a broader shift in the AI market from building models to running them.

What Happened

Nvidia reportedly plans to unveil a processor built specifically for inference computing. That sets it apart from the company's traditional chips, which are typically used to train large AI models.

The announcement is expected at the Nvidia GTC conference next month.

Details From Sources

Definition of Inference Computing

Inference computing is the stage where an AI system answers questions, writes code, or carries out tasks after its model has been built.

Market Shift

Market demand is shifting toward running AI models in everyday applications, which calls for chips that deliver fast answers while using less power.

Potential Customer

Reports indicate OpenAI could become a major customer for the system as it looks to make its tools faster. Such a purchase would form part of OpenAI's broader set of computing agreements.

Competitive Landscape

Competition in the AI chip market is rising. Large tech companies such as Alphabet and Amazon are building their own AI chips to reduce their reliance on Nvidia.

Other Competitors

Smaller startups are also building processors, mainly for inference tasks.

Why This Matters

Faster inference hardware is critical for businesses deploying AI tools in applications such as coding, search, and customer service.

Nvidia's new inference chip is aimed at the growing demand for efficient, real-time AI processing.

Background Context

Nvidia has historically dominated the AI chip market with its graphics processors (GPUs), which are used primarily to train large AI models.

The industry now places greater emphasis on running models efficiently after they have been trained.

Industry Reactions

OpenAI plans to purchase “dedicated inference capacity” from Nvidia, and it has also signed a deal to use Amazon's Trainium chips. Together, the two deals show OpenAI drawing on multiple suppliers for its AI computing needs.

Other major tech companies are developing in-house chips to lessen their dependence on Nvidia's systems. Amazon, for example, is looking to its own chips to cut AI costs.

Related Data or Statistics

Nvidia Stock Performance

Analysts give NVDA stock a Strong Buy consensus rating, based on 37 Buy ratings, one Hold, and one Sell.

Price Target

The average analyst price target for Nvidia is $273.38, implying 54.29% upside from current levels.
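The upside figure comes from simple arithmetic: if a target sits a given percentage above the current price, then the current price equals the target divided by one plus that percentage. The short sketch below backs out the share price implied by the two numbers quoted above. The helper `implied_current_price` is hypothetical and is not any analyst's stated methodology. It also assumes that upside is measured relative to the current price.

```python
# Hypothetical helper (not from the article's source): back out the share
# price implied by an average price target and a stated upside percentage.
def implied_current_price(price_target: float, upside_pct: float) -> float:
    """Return the price at which `price_target` represents `upside_pct` % upside."""
    # Assumes upside = (target - current) / current,
    # so current = target / (1 + upside).
    return price_target / (1 + upside_pct / 100)


# Figures quoted in the article: $273.38 average target, 54.29% upside.
current = implied_current_price(273.38, 54.29)
print(f"Implied current price: ${current:.2f}")  # prints: Implied current price: $177.19
```

With those inputs, the sketch yields roughly $177. That appears to be the price level the 54.29% upside figure was measured against.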

Recent Growth

NVDA shares have surged nearly 42% over the past year.

Future Implications (SPECULATIVE)

The new processor looks like a strategic move to help Nvidia maintain its lead in the AI chip market. It could prove especially important as AI shifts from building models to running applications and delivering real-time AI processing services.

Conclusion

Nvidia is broadening its product lineup with a planned dedicated AI inference chip. The move comes amid rising demand for real-time AI processing and heightened competition in the AI chip market.

FAQ Section

Q1: What is Nvidia’s new AI chip designed for?

A1: Nvidia’s new processor is designed for inference computing, with the goal of speeding up how AI systems respond to users.

Q2: What is inference computing?

A2: Inference computing is the stage where an AI system provides answers, writes code, or performs tasks after its underlying model has been built and trained.

Q3: Where is Nvidia expected to reveal this new chip?

A3: Nvidia is expected to reveal the new processor at its upcoming GTC developer conference.

Q4: How does this new chip differ from Nvidia’s traditional AI chips?

A4: Unlike Nvidia’s traditional chips used for training large AI models, the new processor specifically targets inference computing to handle real-time AI responses more efficiently.

Q5: Is Nvidia facing competition in the AI chip market for inference tasks?

A5: Yes, competition is rising, with companies like Alphabet and Amazon developing their own AI chips, and smaller startups also creating processors for inference tasks.